Free Databricks Certified Associate Developer for Apache Spark 3.5 Exam Practice Questions

Databricks Certified Associate Developer for Apache Spark 3.5 - Python

Total Questions: 135

Databricks Certified Associate Developer for Apache Spark 3.5 Exam - Prepare from Latest, Not Redundant Questions!

Many candidates want to prepare for the Databricks Certified Associate Developer for Apache Spark 3.5 exam using only current, relevant study material. During their research, however, they often lose valuable time on information that is irrelevant or outdated. Study4Exam has a team of subject-matter experts who keep the preparatory material up to date. Whenever the syllabus of the Databricks Certified Associate Developer for Apache Spark 3.5 - Python exam changes, the team updates the questions and removes outdated ones, saving you money and time.

Databricks Certified Associate Developer for Apache Spark 3.5 Exam Sample Questions & Answers

A data scientist is analyzing a large dataset and has written a PySpark script that includes several transformations and actions on a DataFrame. The script ends with a collect() action to retrieve the results.

How does Apache Spark's execution hierarchy process the operations when the data scientist runs this script?

An engineer has a large ORC file located at /file/test_data.orc and wants to read only specific columns to reduce memory usage.

Which code fragment will select the columns, i.e., col1, col2, during the reading process?

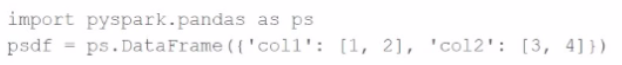

Given the code fragment:

import pyspark.pandas as ps

psdf = ps.DataFrame({'col1': [1, 2], 'col2': [3, 4]})

Which method is used to convert a Pandas API on Spark DataFrame (pyspark.pandas.DataFrame) into a standard PySpark DataFrame (pyspark.sql.DataFrame)?

A Spark engineer is troubleshooting a Spark application that has been encountering out-of-memory errors during execution. By reviewing the Spark driver logs, the engineer notices multiple "GC overhead limit exceeded" messages.

Which action should the engineer take to resolve this issue?

A Spark application needs to read multiple Parquet files from a directory where the files have differing but compatible schemas. The data engineer wants to create a DataFrame that includes all columns from all files.

Which code should the data engineer use to read the Parquet files and include all columns using Apache Spark?
